Abstract

The rapid adoption of artificial intelligence–driven search systems has significantly changed how users discover information online. Generative AI platforms built on large language models (LLMs), such as ChatGPT, Gemini, and Perplexity, increasingly provide synthesized answers instead of traditional lists of web links. This transition has given rise to a developing practice commonly referred to as Large Language Model Optimization (LLMO): the process of structuring digital content to improve its likelihood of inclusion in AI-generated responses.

Introduction

Search engine optimization (SEO) has historically focused on improving website visibility within search engine results pages (SERPs). However, the emergence of generative AI interfaces has shifted information retrieval from keyword-based ranking systems toward conversational answer generation.

Modern AI systems analyze multiple sources simultaneously and generate summarized responses for user queries. As a result, visibility is no longer limited to ranking positions but increasingly depends on whether content is selected, interpreted, and cited within AI-generated answers.

This evolution has led researchers and digital marketers to explore optimization strategies specifically aligned with large language model behavior.

Large Language Models and Information Retrieval

Large language models are machine learning systems trained on extensive datasets containing web content, structured knowledge, and human-generated text. Unlike traditional search engines that display indexed pages, LLM-powered systems attempt to:

- understand user intent,

- retrieve contextual information,

- synthesize responses,

- and present consolidated answers.

Examples of AI-assisted search environments include conversational assistants, AI overview systems, and answer engines integrated into modern search platforms.

These systems prioritize semantic relevance, factual consistency, and contextual authority rather than simple keyword matching.

Emergence of Large Language Model Optimization (LLMO)

Large Language Model Optimization refers to practices aimed at improving how digital content is interpreted and selected by AI systems during response generation.

Related terms that appear in industry discussions include:

- Generative Engine Optimization (GEO)

- Answer Engine Optimization (AEO)

- AI Search Optimization

- LLM Visibility Optimization

While terminology varies, the central objective remains consistent: making content machine-understandable and trustworthy enough to be used in AI summarization.

Key Factors Influencing AI Content Inclusion

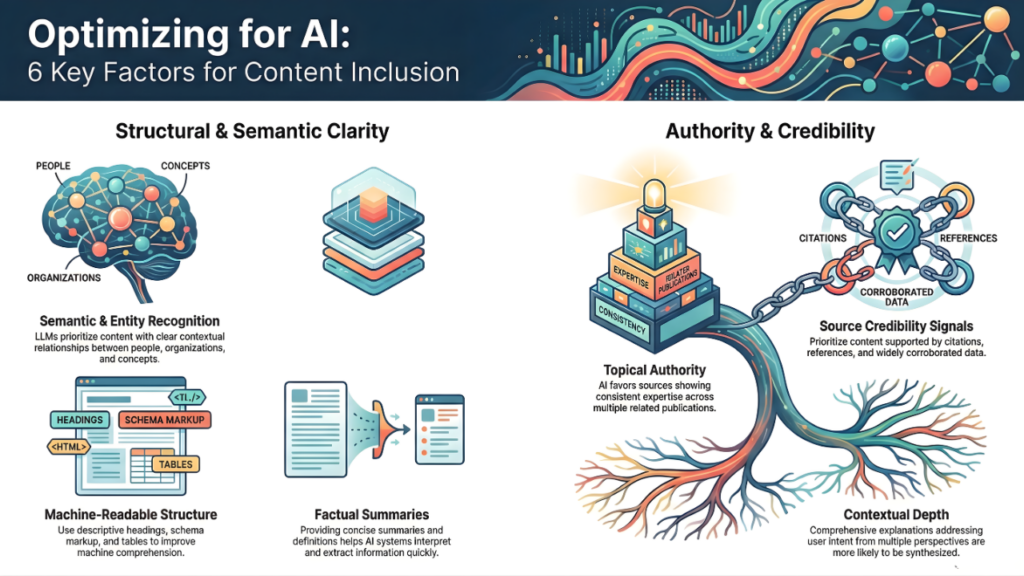

1. Semantic Clarity and Entity Recognition

LLMs rely heavily on contextual relationships between entities such as people, organizations, concepts, and locations. Content structured around clearly defined topics improves interpretability.

2. Topical Authority

AI systems tend to favor sources demonstrating consistent subject expertise across multiple related publications rather than isolated articles.

3. Structured Information

Use of structured formatting improves machine comprehension, including:

- descriptive headings,

- schema markup,

- factual summaries,

- definitions,

- tables and structured explanations.
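
As an illustration of the schema markup item above, the following is a minimal sketch of generating schema.org `Article` markup as JSON-LD. The function names and the sample values are hypothetical and chosen only for this example; the property names (`headline`, `author`, `datePublished`, `about`) come from the schema.org vocabulary. Whether a given AI system reads such markup is not something this sketch can confirm.

```python
import json


def build_article_jsonld(headline, author_name, date_published, topics):
    """Return a schema.org Article description as a JSON-LD string.

    All argument values are supplied by the caller; nothing here is
    specific to any particular search or AI platform.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Organization", "name": author_name},
        "datePublished": date_published,
        # "about" names the entities the page covers.
        "about": [{"@type": "Thing", "name": topic} for topic in topics],
    }
    return json.dumps(data, indent=2)


def wrap_in_script_tag(jsonld):
    """Wrap a JSON-LD string in the script element used to embed it in HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


# Hypothetical sample values, for illustration only.
markup = build_article_jsonld(
    headline="Large Language Model Optimization (LLMO)",
    author_name="Example Publisher",
    date_published="2024-01-01",
    topics=["Large Language Model Optimization", "Search engine optimization"],
)
print(wrap_in_script_tag(markup))
```

The printed element would typically be placed in the page's HTML so that automated systems can read the same facts the visible text states.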

4. Source Credibility Signals

AI-generated answers often prioritize information supported by citations, references, or widely corroborated sources.

5. Contextual Depth

Comprehensive explanations that address user intent from multiple perspectives are more likely to be referenced during AI synthesis.

Differences Between Traditional SEO and LLM Optimization

| Aspect | Traditional SEO | LLM Optimization |
|---|---|---|
| Primary Output | Ranked links | Generated answers |
| Optimization Target | Search algorithms | Language models |
| Ranking Factor | Keywords & backlinks | Context & authority |
| User Interaction | Clicking results | Conversational queries |
| Visibility | SERP position | AI citation or inclusion |

LLMO does not replace SEO but represents an extension of optimization practices adapted to AI-driven discovery systems.

Role of Structured Data and Knowledge Representation

Structured data enables clearer communication between web content and automated systems. Knowledge graphs, entity relationships, and standardized metadata contribute to improved machine interpretation.

As AI systems increasingly rely on structured understanding rather than isolated documents, knowledge representation has become a critical component of digital visibility.
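
To make the idea of entity relationships concrete, here is a minimal sketch that stores a few relationships as subject–predicate–object triples and queries them. The predicate labels (`extends`, `relatedTo`, `focusesOn`) and the helper names are assumptions made for this example rather than part of any standard; the facts themselves restate relationships described in this article.

```python
from collections import defaultdict

# Each triple is (subject, predicate, object). Predicate names are
# hypothetical labels chosen for this sketch.
TRIPLES = [
    ("LLMO", "extends", "SEO"),
    ("LLMO", "relatedTo", "GEO"),
    ("LLMO", "relatedTo", "AEO"),
    ("GEO", "focusesOn", "generative search engines"),
]


def build_graph(triples):
    """Index triples by subject so an entity's relationships are easy to look up."""
    graph = defaultdict(list)
    for subject, predicate, obj in triples:
        graph[subject].append((predicate, obj))
    return graph


def objects_of(graph, entity, predicate):
    """Return every object linked to `entity` through `predicate`."""
    return [obj for pred, obj in graph.get(entity, []) if pred == predicate]


graph = build_graph(TRIPLES)
print(objects_of(graph, "LLMO", "relatedTo"))  # ['GEO', 'AEO']
```

Representing content this way makes each relationship explicit instead of leaving it to be inferred from surrounding prose.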

Industry Adoption and Ongoing Research

Digital marketing professionals and researchers have begun examining how generative AI reshapes content discovery models. Studies and industry analyses suggest that AI-assisted search environments reward informational accuracy, transparency, and topical specialization.

Organizations are increasingly adapting publishing strategies to ensure compatibility with AI-driven search ecosystems.

Challenges and Limitations

Despite growing interest, LLM optimization remains an evolving field. Key challenges include:

- limited transparency into how sources are selected and ranked,

- variability across AI systems,

- model training limitations, such as training data that does not reflect recent content,

- attribution inconsistencies in generated responses.

Because AI outputs are probabilistic rather than deterministic, inclusion cannot be guaranteed through optimization alone.
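
Since a single response says little about a probabilistic system, one way to reason about inclusion is as a rate measured over repeated responses to the same query. The sketch below is a simplified illustration: the function name is hypothetical, the sample responses are invented placeholder strings rather than real AI output, and a plain substring match stands in for proper citation detection.

```python
def inclusion_rate(responses, domain):
    """Return the fraction of responses that mention `domain`.

    Uses a case-insensitive substring match, which is a deliberate
    simplification of real citation detection.
    """
    if not responses:
        return 0.0
    needle = domain.lower()
    hits = sum(1 for text in responses if needle in text.lower())
    return hits / len(responses)


# Invented placeholder responses, for illustration only.
sample_responses = [
    "LLMO structures content for AI answers (source: example.com).",
    "LLMO is an extension of SEO practices.",
    "According to Example.com, structured data aids interpretation.",
    "Answer engines synthesize information from multiple sources.",
]

print(inclusion_rate(sample_responses, "example.com"))  # 0.5
```

A rate computed this way describes only the sampled responses; it does not predict whether any future answer will include the source.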

Future Outlook

As conversational interfaces become integrated into mainstream search experiences, optimization practices are expected to expand beyond webpages toward structured knowledge ecosystems.

Future developments may include:

- AI citation tracking,

- entity-based authority measurement,

- machine-readable publishing standards,

- hybrid search and answer environments.

Large Language Model Optimization is therefore viewed as a continuation of search evolution rather than a replacement of existing digital marketing methodologies.

FAQs – Large Language Model Optimization (LLMO)

What is Large Language Model Optimization (LLMO)?

Large Language Model Optimization (LLMO) refers to the practice of structuring digital content so it can be accurately understood and included in responses generated by AI systems powered by large language models. Unlike traditional SEO, which focuses on rankings, LLMO focuses on improving content visibility within AI-generated answers.

How is LLMO different from traditional SEO?

Traditional SEO aims to improve a website’s ranking in search engine results pages, while LLMO focuses on increasing the likelihood that content will be interpreted, summarized, or cited by AI-powered search and conversational systems.

Why is LLM optimization becoming important?

AI-driven search experiences increasingly provide direct answers instead of lists of links. As users interact with conversational AI systems, content visibility depends on whether information is selected by large language models during response generation.

What types of platforms use large language models for search?

Large language models are used in AI assistants, conversational search interfaces, AI overview systems, and answer engines that generate summarized responses using information collected from multiple web sources.

What factors influence content inclusion in AI-generated answers?

Key factors include semantic clarity, topical authority, structured formatting, factual accuracy, entity recognition, and consistency across related content topics.

Does LLMO replace search engine optimization?

No. Large Language Model Optimization is generally considered an extension of SEO rather than a replacement. Traditional optimization practices such as high-quality content and technical accessibility remain important foundations.

How does structured data help LLM visibility?

Structured data helps AI systems interpret relationships between topics, entities, and information. Proper formatting improves machine readability, making it easier for AI models to understand and reference content.

What is the relationship between LLMO and Generative Engine Optimization (GEO)?

Both concepts address optimization for AI-generated search experiences. GEO typically focuses on generative search engines, while LLMO emphasizes how large language models interpret and synthesize information.

Can websites guarantee inclusion in AI-generated responses?

No optimization method can guarantee inclusion. AI-generated answers depend on multiple factors such as model training, source credibility, contextual relevance, and query interpretation.

What is the future of Large Language Model Optimization?

As AI-assisted search continues to evolve, optimization practices are expected to focus more on entity authority, knowledge representation, structured publishing, and trustworthy information sources.

Conclusion

The transition from link-based search results to AI-generated answers represents a fundamental shift in online information discovery. Large Language Model Optimization reflects ongoing efforts to adapt digital content for machine interpretation, contextual understanding, and trustworthy synthesis within AI systems.

As generative technologies continue to influence how users access information, understanding the relationship between structured content and AI visibility is likely to become an essential component of modern web publishing.